diff --git a/docs/docs/guides/providers/image.png b/docs/docs/guides/providers/image.png

deleted file mode 100644

index 5f1f7104e..000000000

Binary files a/docs/docs/guides/providers/image.png and /dev/null differ

diff --git a/docs/docs/guides/providers/tensorrt-llm.md b/docs/docs/guides/providers/tensorrt-llm.md

index b0485fd57..3526ef25d 100644

--- a/docs/docs/guides/providers/tensorrt-llm.md

+++ b/docs/docs/guides/providers/tensorrt-llm.md

@@ -15,72 +15,197 @@ slug: /guides/providers/tensorrt-llm

-Users with Nvidia GPUs can get **20-40% faster\* token speeds** on their laptop or desktops by using [TensorRT-LLM](https://github.com/NVIDIA/TensorRT-LLM). The greater implication is that you are running FP16, which is also more accurate than quantized models.

+:::info

-This guide walks you through how to install Jan's official [TensorRT-LLM Extension](https://github.com/janhq/nitro-tensorrt-llm). This extension uses [Nitro-TensorRT-LLM](https://github.com/janhq/nitro-tensorrt-llm) as the AI engine, instead of the default [Nitro-Llama-CPP](https://github.com/janhq/nitro). It includes an efficient C++ server to natively execute the [TRT-LLM C++ runtime](https://nvidia.github.io/TensorRT-LLM/gpt_runtime.html). It also comes with additional feature and performance improvements like OpenAI compatibility, tokenizer improvements, and queues.

+TensorRT-LLM support was launched in Jan v0.4.9 and should be regarded as an experimental feature.

-\*Compared to using LlamaCPP engine.

-

-:::warning

-This feature is only available for Windows users. Linux is coming soon.

-

-Additionally, we only prebuilt a few demo models. You can always build your desired models directly on your machine. [Read here](#build-your-own-tensorrt-models).

+- Only Windows is supported for now.

+- Please report bugs in our Discord's [#tensorrt-llm](https://discord.com/channels/1107178041848909847/1201832734704795688) channel.

:::

+Jan supports [TensorRT-LLM](https://github.com/NVIDIA/TensorRT-LLM) as an alternate inference engine for users with Nvidia GPUs. TensorRT-LLM delivers very fast inference, but it requires GPUs with [large amounts of VRAM](https://nvidia.github.io/TensorRT-LLM/memory.html).

+

+## What is TensorRT-LLM?

+

+[TensorRT-LLM](https://github.com/NVIDIA/TensorRT-LLM) is a hardware-optimized LLM inference engine that compiles models to run extremely fast on Nvidia GPUs.

+- Mainly used on Nvidia's Datacenter-grade GPUs like the H100s [to produce 10,000 tok/s](https://nvidia.github.io/TensorRT-LLM/blogs/H100vsA100.html).

+- Can be used on Nvidia's workstation (e.g. [A6000](https://www.nvidia.com/en-us/design-visualization/rtx-6000/)) and consumer-grade GPUs (e.g. [RTX 4090](https://www.nvidia.com/en-us/geforce/graphics-cards/40-series/rtx-4090/))

+

+:::tip[Benefits]

+

+- Our performance testing shows 20-40% faster token/s speeds on consumer-grade GPUs

+- On datacenter-grade GPUs, TensorRT-LLM can go up to 10,000 tokens/s

+- TensorRT-LLM is a relatively new library that was [released in Sept 2023](https://github.com/NVIDIA/TensorRT-LLM/graphs/contributors). We anticipate performance and resource utilization improvements in the future.

+

+:::

+

+:::warning[Caveats]

+

+- TensorRT-LLM requires models to be compiled into GPU and OS-specific "Model Engines" (vs. GGUF's "convert once, run anywhere" approach)

+- TensorRT-LLM Model Engines tend to use more VRAM and RAM in exchange for performance

+- This usually means only people with top-of-the-line Nvidia GPUs can use TensorRT-LLM

+

+:::

+

+

## Requirements

-- A Windows PC

+### Hardware

+

+- Windows PC

- Nvidia GPU(s): Ada or Ampere series (i.e. RTX 4000s & 3000s). More will be supported soon.

- 3GB+ of disk space to download TRT-LLM artifacts and a Nitro binary

-- Jan v0.4.9+ or Jan v0.4.8-321+ (nightly)

-- Nvidia Driver v535+ ([installation guide](https://jan.ai/guides/common-error/not-using-gpu/#1-ensure-gpu-mode-requirements))

-- CUDA Toolkit v12.2+ ([installation guide](https://jan.ai/guides/common-error/not-using-gpu/#1-ensure-gpu-mode-requirements))

-## Install TensorRT-Extension

+**Compatible GPUs**

+

+| Architecture | Supported? | Consumer-grade | Workstation-grade |

+| ------------ | --- | -------------- | ----------------- |

+| Ada | ✅ | 4050 and above | RTX A2000 Ada |

+| Ampere | ✅ | 3050 and above | A100 |

+| Turing | ❌ | Not Supported | Not Supported |

+

+:::info

+

+Please ping us in Discord's [#tensorrt-llm](https://discord.com/channels/1107178041848909847/1201832734704795688) channel if you would like Turing support.

+

+:::

+

+### Software

+

+- Jan v0.4.9+ or Jan v0.4.8-321+ (nightly)

+- [Nvidia Driver v535+](https://jan.ai/guides/common-error/not-using-gpu/#1-ensure-gpu-mode-requirements)

+- [CUDA Toolkit v12.2+](https://jan.ai/guides/common-error/not-using-gpu/#1-ensure-gpu-mode-requirements)

+

+## Getting Started

+

+### Install TensorRT-Extension

1. Go to Settings > Extensions

-2. Click install next to the TensorRT-LLM Extension

-3. Check that files are correctly downloaded

+2. Install the TensorRT-LLM Extension

+

+:::info

+You can check if files have been correctly downloaded:

```sh

ls ~\jan\extensions\@janhq\tensorrt-llm-extension\dist\bin

-# Your Extension Folder should now include `nitro.exe`, among other artifacts needed to run TRT-LLM

+# Your Extension Folder should now include `nitro.exe`, among other `.dll` files needed to run TRT-LLM

```

-

-## Download a Compatible Model

-

-TensorRT-LLM can only run models in `TensorRT` format. These models, aka "TensorRT Engines", are prebuilt specifically for each target OS+GPU architecture.

-

-We offer a handful of precompiled models for Ampere and Ada cards that you can immediately download and play with:

-

-1. Restart the application and go to the Hub

-2. Look for models with the `TensorRT-LLM` label in the recommended models list. Click download. This step might take some time. 🙏

-

-

-

-3. Click use and start chatting!

-4. You may need to allow Nitro in your network

-

-

-

-:::warning

-If you are our nightly builds, you may have to reinstall the TensorRT-LLM extension each time you update the app. We're working on better extension lifecyles - stay tuned.

:::
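The manual check above can also be scripted. The following is a minimal sketch, assuming the extension lives at the path used in the `ls` command; the helper name `check_extension_files` is hypothetical and not part of Jan.

```python
from pathlib import Path


def check_extension_files(bin_dir: Path) -> dict:
    """Report whether `nitro.exe` and any `.dll` files exist in bin_dir."""
    # A missing directory is treated the same as an empty one.
    files = [p.name for p in bin_dir.iterdir()] if bin_dir.is_dir() else []
    return {
        "nitro_exe": "nitro.exe" in files,
        "dll_count": sum(1 for name in files if name.lower().endswith(".dll")),
    }


if __name__ == "__main__":
    # Path taken from the `ls` command above; adjust it if your Jan data
    # folder lives somewhere else (assumption: default location).
    bin_dir = (
        Path.home() / "jan" / "extensions" / "@janhq"
        / "tensorrt-llm-extension" / "dist" / "bin"
    )
    print(check_extension_files(bin_dir))
```

If `nitro_exe` is `False` or `dll_count` is `0`, the download probably did not complete and reinstalling the extension is a reasonable next step.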

-## Configure Settings

+### Download a TensorRT-LLM Model

-You can customize the default parameters for how Jan runs TensorRT-LLM.

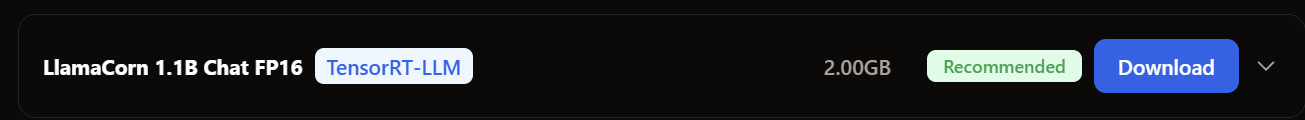

+Jan's Hub offers a few pre-compiled TensorRT-LLM models for download. They are marked with a `TensorRT-LLM` label.

-:::info

+- We automatically download the TensorRT-LLM Model Engine for your GPU architecture

+- We have made a few 1.1B models available that can run even on laptop GPUs with 8 GB of VRAM

+

+

+| Model | OS | Ada (40XX) | Ampere (30XX) | Description |

+| ------------------- | ------- | ---------- | ------------- | --------------------------------------------------- |

+| Llamacorn 1.1b | Windows | ✅ | ✅ | TinyLlama-1.1b, fine-tuned for usability |

+| TinyJensen 1.1b | Windows | ✅ | ✅ | TinyLlama-1.1b, fine-tuned on Jensen Huang speeches |

+| Mistral Instruct 7b | Windows | ✅ | ✅ | Mistral |

+

+### Importing Pre-built Models

+

+You can import a pre-built model by creating a new folder in Jan's `/models` directory that includes:

+

+- TensorRT-LLM Engine files (e.g. `tokenizer`, `.engine`, etc)

+- `model.json` that registers these files, and specifies `engine` as `nitro-tensorrt-llm`

+

+:::note[Sample model.json]

+

+Note the `engine` is `nitro-tensorrt-llm`: this won't work without it!

+

+```json

+{

+ "sources": [

+ {

+ "filename": "config.json",

+ "url": "https://delta.jan.ai/dist/models///tensorrt-llm-v0.7.1/TinyJensen-1.1B-Chat-fp16/config.json"

+ },

+ {

+ "filename": "mistral_float16_tp1_rank0.engine",

+ "url": "https://delta.jan.ai/dist/models///tensorrt-llm-v0.7.1/TinyJensen-1.1B-Chat-fp16/mistral_float16_tp1_rank0.engine"

+ },

+ {

+ "filename": "tokenizer.model",

+ "url": "https://delta.jan.ai/dist/models///tensorrt-llm-v0.7.1/TinyJensen-1.1B-Chat-fp16/tokenizer.model"

+ },

+ {

+ "filename": "special_tokens_map.json",

+ "url": "https://delta.jan.ai/dist/models///tensorrt-llm-v0.7.1/TinyJensen-1.1B-Chat-fp16/special_tokens_map.json"

+ },

+ {

+ "filename": "tokenizer.json",

+ "url": "https://delta.jan.ai/dist/models///tensorrt-llm-v0.7.1/TinyJensen-1.1B-Chat-fp16/tokenizer.json"

+ },

+ {

+ "filename": "tokenizer_config.json",

+ "url": "https://delta.jan.ai/dist/models///tensorrt-llm-v0.7.1/TinyJensen-1.1B-Chat-fp16/tokenizer_config.json"

+ },

+ {

+ "filename": "model.cache",

+ "url": "https://delta.jan.ai/dist/models///tensorrt-llm-v0.7.1/TinyJensen-1.1B-Chat-fp16/model.cache"

+ }

+ ],

+ "id": "tinyjensen-1.1b-chat-fp16",

+ "object": "model",

+ "name": "TinyJensen 1.1B Chat FP16",

+ "version": "1.0",

+ "description": "Do you want to chat with Jensen Huang? Here you are",

+ "format": "TensorRT-LLM",

+ "settings": {

+ "ctx_len": 2048,

+ "text_model": false

+ },

+ "parameters": {

+ "max_tokens": 4096

+ },

+ "metadata": {

+ "author": "LLama",

+ "tags": [

+ "TensorRT-LLM",

+ "1B",

+ "Finetuned"

+ ],

+ "size": 2151000000

+ },

+ "engine": "nitro-tensorrt-llm"

+}

+```

+

+:::
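Before restarting Jan, it can help to sanity-check an imported model folder. The sketch below is illustrative only: `validate_model_folder` is a hypothetical helper, and it assumes that every `filename` listed under `sources` should already sit next to `model.json`. It checks just the two points made above, namely that the engine files are present and that `engine` is `nitro-tensorrt-llm`.

```python
import json
from pathlib import Path

REQUIRED_ENGINE = "nitro-tensorrt-llm"


def validate_model_folder(folder: Path) -> list[str]:
    """Return a list of problems found in a model folder; empty means it looks OK."""
    manifest = folder / "model.json"
    if not manifest.is_file():
        return ["model.json is missing"]

    model = json.loads(manifest.read_text(encoding="utf-8"))
    problems = []

    # The guide stresses that the model will not load without this engine value.
    if model.get("engine") != REQUIRED_ENGINE:
        problems.append(
            f"engine should be {REQUIRED_ENGINE!r}, got {model.get('engine')!r}"
        )

    # Assumption: each registered source file is expected in the same folder.
    for source in model.get("sources", []):
        filename = source.get("filename")
        if not filename or not (folder / filename).is_file():
            problems.append(f"missing file: {filename}")

    return problems
```

Calling `validate_model_folder(Path("path/to/your/model-folder"))` returns an empty list when both checks pass; the folder path here is a placeholder.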

+

+### Using a TensorRT-LLM Model

+

+You can just select and use a TensorRT-LLM model from Jan's Thread interface.

+- Jan will automatically start the TensorRT-LLM model engine in the background

+- You may encounter a pop-up from Windows Security asking you to allow Nitro public and private network access

+

+:::info[Why does Nitro need network access?]

+

+- This is because Jan runs TensorRT-LLM using the [Nitro Server](https://github.com/janhq/nitro-tensorrt-llm/)

+- Jan makes network calls to the Nitro server running on your computer on a separate port

+

+:::

+

+### Configure Settings

+

+:::note

coming soon

:::

## Troubleshooting

-### Incompatible Extension vs Engine versions

+## Extension Details

-For now, the model versions are pinned to the extension versions.

+Jan's TensorRT-LLM Extension is built on the open source [Nitro TensorRT-LLM Server](https://github.com/janhq/nitro-tensorrt-llm), a C++ inference server for TensorRT-LLM that provides an OpenAI-compatible API.
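As a rough illustration of what "OpenAI-compatible" means in practice, the sketch below builds a chat completion request in the OpenAI format without sending it. The port and the `/v1/chat/completions` path are assumptions based on the OpenAI convention, not values confirmed by this guide, and `build_chat_request` is a hypothetical helper; check the Nitro TensorRT-LLM README for the actual address and route.

```python
import json
import urllib.request

# Hypothetical address: this guide does not state which port Nitro listens on.
BASE_URL = "http://localhost:3928"


def build_chat_request(
    model_id: str, prompt: str, base_url: str = BASE_URL
) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model_id,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        # Assumed route, following the OpenAI API convention.
        url=f"{base_url}/v1/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


if __name__ == "__main__":
    req = build_chat_request("tinyjensen-1.1b-chat-fp16", "Hello!")
    print(req.get_method(), req.full_url)
```

With a model running, passing the request to `urllib.request.urlopen` would send it; that step is left out here because the server address is an assumption.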

+

+### Manual Build

+

+To manually build the artifacts needed to run the server and TensorRT-LLM, follow the [quickstart in the source repository](https://github.com/janhq/nitro-tensorrt-llm?tab=readme-ov-file#quickstart).

### Uninstall Extension

@@ -89,11 +214,8 @@ For now, the model versions are pinned to the extension versions.

3. Delete the entire Extensions folder.

4. Reopen the app, only the default extensions should be restored.

-### Install Nitro-TensorRT-LLM manually

-To manually build the artifacts needed to run the server and TensorRT-LLM, you can reference the source code. [Read here](https://github.com/janhq/nitro-tensorrt-llm?tab=readme-ov-file#quickstart).

-

-### Build your own TensorRT models

+## Build your own TensorRT models

:::info

coming soon

diff --git a/docs/docs/integrations/tensorrt.md b/docs/docs/integrations/tensorrt.md

deleted file mode 100644

index 8a77d1436..000000000

--- a/docs/docs/integrations/tensorrt.md

+++ /dev/null

@@ -1,8 +0,0 @@

----

-title: TensorRT-LLM

----

-

-## Quicklinks

-

-- Jan Framework [Extension Code](https://github.com/janhq/jan/tree/main/extensions/inference-triton-trtllm-extension)

-- TensorRT [Source URL](https://github.com/NVIDIA/TensorRT-LLM)

diff --git a/docs/docusaurus.config.js b/docs/docusaurus.config.js

index 761e741db..60d2cd7af 100644

--- a/docs/docusaurus.config.js

+++ b/docs/docusaurus.config.js

@@ -117,6 +117,10 @@ const config = {

from: '/guides/using-extensions/',

to: '/guides/extensions/',

},

+ {

+ from: '/integrations/tensorrt',

+ to: '/guides/providers/tensorrt-llm'

+ },

],

},

],